I have been involved in creating High Availability solutions for our NightWatchman Enterprise products, and as part of that work I have recently worked with Windows Failover Clustering and NLB clustering in Windows Server 2012. I thought it might be good to share my experiences with implementing these technologies. I've built multi-node failover clusters for SQL Server, Hyper-V, and various applications, as well as Network Load Balancing clusters. The smallest were two-node SQL Server clusters. I've built five-node Hyper-V clusters. The largest clusters I've built were VMware clusters with more than twice that number of nodes.

In this series I will cover the creation of a simple two-node Active/Passive Windows Failover Cluster. Creating such a cluster requires a few items to be in place first, so I figured I should start at the beginning. This post addresses connecting to shared storage using the iSCSI initiator. The other installments in this series will be:

- Creating a Windows Cluster: Part 2 – Configuring Shared Disk in the OS

- Creating a Windows Cluster: Part 3 – Creating a Windows Failover Cluster

- Creating a Windows Cluster: Part 4 – Setting up a SQL Cluster

- Creating a Windows Cluster: Part 5 – Adding Applications and Services to the Cluster

- Creating a Windows Cluster: Part 6 – Creating a Network Load Balancing Cluster

Clusters need shared storage, and that is why I am starting with this topic. You can't build a cluster without having some sort of shared storage in place. For my lab I built out a SAN solution. Two popular methods of connecting to shared disk are iSCSI and Fibre Channel (yes, that is spelled correctly). I used iSCSI, mainly because I was using a virtual SAN appliance running FreeNAS that I had created on my VMware host, and iSCSI was the only real option. In a production environment you may want to use Fibre Channel for greater performance.

To start, I built two machines with Windows Server 2012 and added three network adapters to each of these prospective cluster nodes. The first is for general network communication and is on the LAN. The second is for iSCSI connectivity (other methods can be used to connect to shared storage, but I used iSCSI as mentioned above). The third is for the cluster Heartbeat network. It is the Heartbeat that monitors cluster node availability so that a failover can be triggered when a node hosting resources becomes unavailable. The three adapters are connected to three separate networks, with the iSCSI and Heartbeat NICs attached to isolated segments dedicated to those specific types of communication.
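To make the Heartbeat idea concrete, here is a minimal sketch of timeout-based availability checking. It is a toy model and not how Windows Failover Clustering implements its heartbeat; the node names, timestamps, and the five-second threshold are all assumptions chosen for illustration.

```python
def unavailable_nodes(last_seen, now, timeout_seconds):
    """Return the nodes whose most recent heartbeat is older than the timeout.

    last_seen maps a node name to the time (in seconds) its last heartbeat
    arrived. All values here are hypothetical, not Windows defaults.
    """
    return sorted(
        node for node, seen in last_seen.items()
        if now - seen > timeout_seconds
    )


# Hypothetical state: NODE1 was heard from 1 second ago, NODE2 8 seconds ago.
last_seen = {"NODE1": 100.0, "NODE2": 93.0}

# With a 5-second threshold, only NODE2 is considered unavailable,
# which is the point at which a failover would be triggered.
print(unavailable_nodes(last_seen, now=101.0, timeout_seconds=5.0))  # ['NODE2']
```

Keeping this traffic on its own isolated segment, as described above, means ordinary LAN congestion is less likely to delay heartbeats and cause a false failover.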

When you are done building out the servers, they should be exactly the same. This is because the resources hosted by the cluster (disks, networks, applications) may be hosted by any of the nodes in the cluster, and any failover of resources needs to be predictable. For example, suppose an application requiring .NET Framework 4.5 is running on a node that has it installed, and the other node in the cluster does not. If the application has to fail over to the other node, it will not be able to run, rendering your cluster useless.
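One way to check that the nodes match is to compare an inventory of what is installed on each. The sketch below assumes you have already collected the component names for each node by some means of your own; the inventories shown are made-up examples, not output from a real tool.

```python
def missing_components(node_a, node_b):
    """Compare two sets of installed components.

    Returns a dict listing what each node lacks relative to the other.
    An empty list on both sides means the nodes match.
    """
    return {
        "missing_on_a": sorted(node_b - node_a),
        "missing_on_b": sorted(node_a - node_b),
    }


# Hypothetical inventories for the two prospective cluster nodes.
node1 = {".NET Framework 4.5", "Failover Clustering"}
node2 = {"Failover Clustering"}

result = missing_components(node1, node2)
print(result["missing_on_a"])  # []
print(result["missing_on_b"])  # ['.NET Framework 4.5']
```

Here the second node lacks .NET Framework 4.5, which is exactly the mismatch that would break a failover for an application that depends on it.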

Configure the network interfaces on both systems. Ensure that each can communicate with the other interfaces on the same network segment (including the shared storage that the iSCSI network connects to). On the LAN segment, include the gateway and DNS settings, because this network is where all normal communication occurs and its traffic needs to be routed through your network. For the iSCSI and Heartbeat networks you only need to provide the IP address and the subnet mask. I used a subnet mask of 255.255.255.240 (14 usable addresses) on the iSCSI and Heartbeat networks in my lab setup since there were only two machines. Companies often use similarly small subnets for those networks. You do not need to provide a gateway or a DNS server, since these networks are single, isolated subnets and their traffic will not be routed to any other subnet.
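If you want to sanity-check the addressing before assigning it, Python's standard `ipaddress` module can do the arithmetic. This is a small sketch; the 192.168.10.x addresses are placeholders I made up for illustration, and only the 255.255.255.240 mask comes from my lab setup.

```python
import ipaddress


def describe_subnet(address, mask):
    """Return (prefix length, number of usable host addresses) for a subnet."""
    net = ipaddress.ip_network(f"{address}/{mask}", strict=False)
    return net.prefixlen, len(list(net.hosts()))


def same_subnet(ip_a, ip_b, mask):
    """Return True if both addresses fall inside the same subnet."""
    net = ipaddress.ip_network(f"{ip_a}/{mask}", strict=False)
    return ipaddress.ip_address(ip_b) in net


MASK = "255.255.255.240"

# A 255.255.255.240 mask is a /28 with 14 usable host addresses.
print(describe_subnet("192.168.10.1", MASK))              # (28, 14)

# Two placeholder node addresses on the same isolated segment.
print(same_subnet("192.168.10.1", "192.168.10.2", MASK))  # True

# .17 belongs to the next /28 block, so it would not be reachable
# without a gateway, which these networks do not have.
print(same_subnet("192.168.10.1", "192.168.10.17", MASK)) # False
```

Because the iSCSI and Heartbeat networks have no gateway, a check like `same_subnet` is a quick way to catch an address that has accidentally been placed outside the segment.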

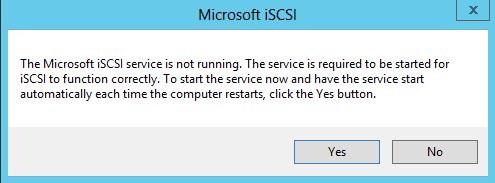

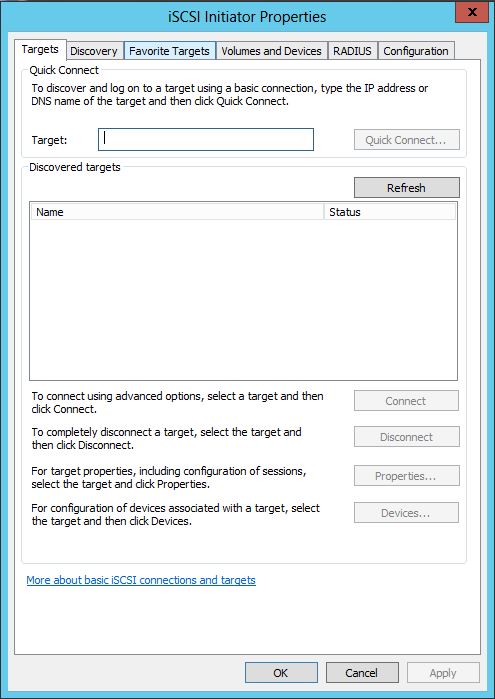

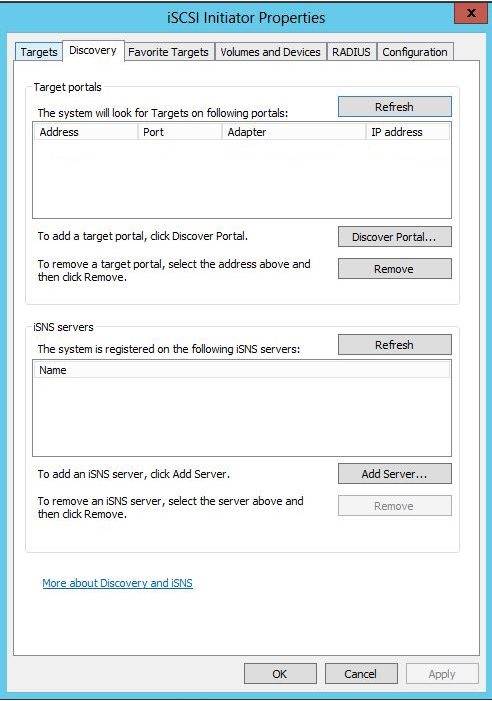

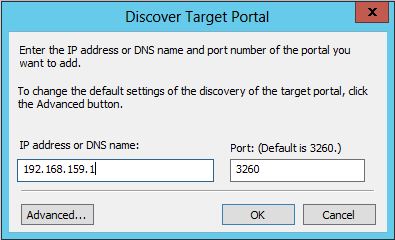

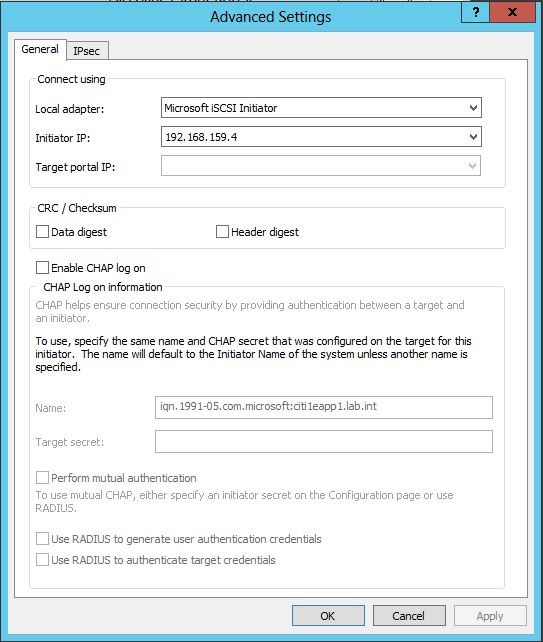

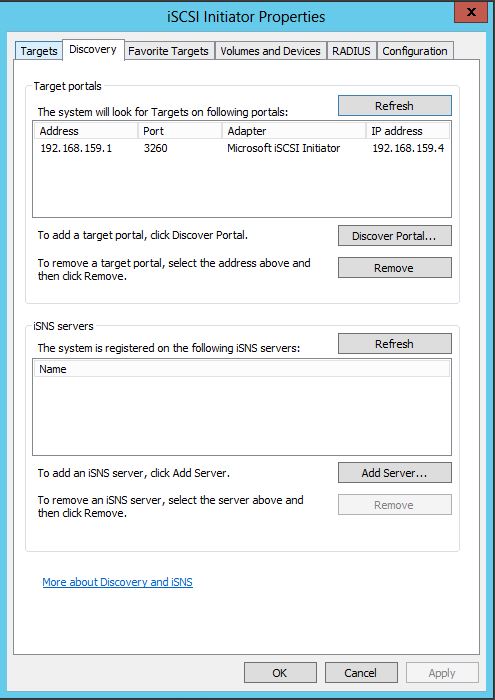

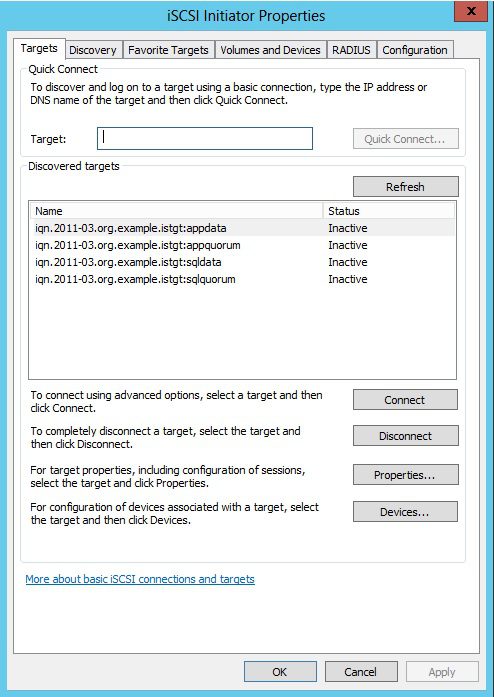

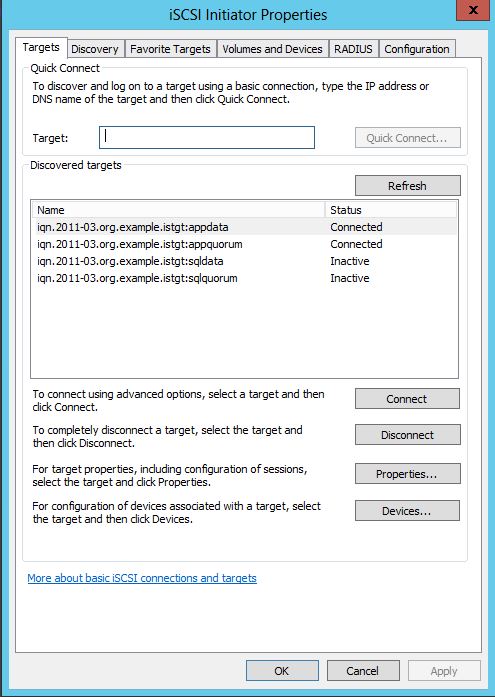

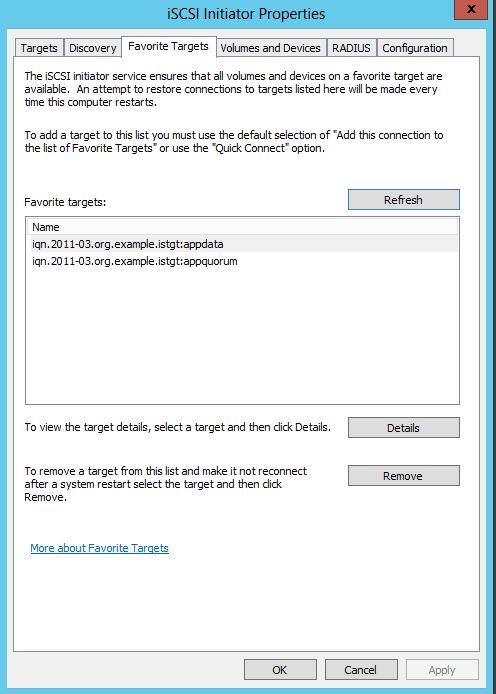

The screenshots above walk through the steps I used to configure iSCSI.

The next installment will be on configuring these new disks in the OS as you continue preparing to create your cluster. Until then, I wish you all a great day!