There was once a small village located far in the mountains. It was too far away from any city or market, and the people there lived a hard life. The narrow mountain roads were risky and dangerous. At times the roads became impassable altogether, and at times the villagers wouldn’t go out at all.

A keg of ale was as expensive as an ounce of gold. Such was the misery of the folks there.

And one glorious day, when the sun was shining true and high, a wizard of great power came to the village. All the townsfolk gathered in the main street and greeted the wizard with open hearts. In return, the wizard gave them a magical chant. If someone had a thing and someone else needed that thing, all they had to do was utter the magical words and boom!!! There was a clone of that item, the same as the original. They could generate as many clones as they needed. No more hard and expensive trips to faraway cities to get that item again and again. Just get it once, and all the townsfolk could have it (or its clones). And the villagers lived happily ever after.

Now for us computer folks, this story was never that simple nor that magical. But indeed, the magic happened. Imagine your network as the village in the story and the computers as the villagers. You need an item that can only be downloaded from a faraway server on a congested network with too many connections to it. Someone must go to that server and download the file(s). Bandwidth and time are the prized commodities here.

It’s painful but still possible to download the file(s) once or twice, but anything more than that is an inefficient use of prized bandwidth. Copying a file over a slow and congested network is also time-consuming. Imagine malware spreading through the network at lightning speed. You need to respond faster than it does. But alas! Your fix is lying thousands of miles away.

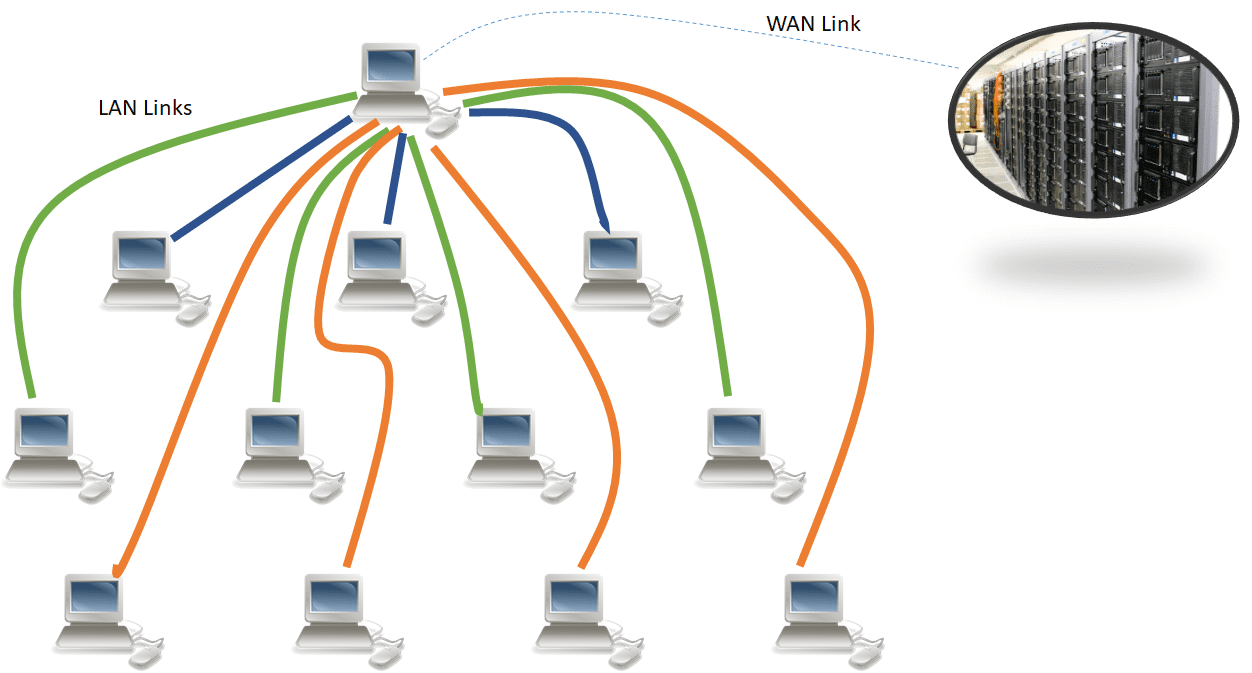

Consider the network of a company that has its research center in the US and a branch network in Australia. A single slow link connects the two sites, and downloads run over HTTP. The company has an upgrade patch that it needs to roll out in the branch network. Someone must go to a web page and download the patch over HTTP(S).

Suppose the company has a WAN link of 100 Mbps and a LAN of 10 Gbps. The patch is 1 GB, and we assume that even with all the congestion from video conferencing, audio calls, and normal data flow, a user can download it in 10 minutes.

To raise the stakes, suppose the branch location has 15K devices. Done the inefficient legacy way, with every device fetching its own copy one after another, that means 15 terabytes crossing the 100 Mbps WAN link and 150,000 minutes (about 104 days) just to download the patch. All the WAN bandwidth goes into downloading the patch while the LAN bandwidth sits idle.
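The arithmetic behind those figures can be checked with a short sketch. It assumes the scenario's numbers (15,000 devices, a 1 GB patch, 10 minutes per download) and that the downloads happen strictly one after another; the function name `serial_wan_cost` is hypothetical and used only for illustration.

```python
# Figures from the scenario above; the names are illustrative only.
DEVICES = 15_000
PATCH_GB = 1
MINUTES_PER_DOWNLOAD = 10  # assumed time for one download over the congested WAN


def serial_wan_cost(devices, patch_gb, minutes_per_download):
    """Return (terabytes moved, total minutes, total days) when every
    device downloads its own copy over the WAN, one after another."""
    total_tb = devices * patch_gb / 1000          # decimal units: 1 TB = 1000 GB
    total_minutes = devices * minutes_per_download
    total_days = total_minutes / (60 * 24)
    return total_tb, total_minutes, total_days


total_tb, total_minutes, total_days = serial_wan_cost(
    DEVICES, PATCH_GB, MINUTES_PER_DOWNLOAD
)
print(f"{total_tb:.0f} TB over the WAN, {total_minutes:,} minutes, "
      f"about {total_days:.0f} days")
# prints: 15 TB over the WAN, 150,000 minutes, about 104 days
```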

That’s a nightmare for IT administrators.

And OS migration work… Well, you can imagine the anxiety attacks IT admins get. There are organizations that download petabytes of data over their WAN links, and even after spending millions, OS migration and IT infrastructure maintenance remain quite scary. With Windows 10, the situation is getting even scarier. Microsoft is working to shorten its release cycles, so updates are arriving faster and more frequently than ever. IT administrators are really in a fix.

- P2P is the savior in all the scary scenarios mentioned above. It is simple, effective, and time-proven. It is as simple as downloading one copy locally and then spreading it across your network using the local LAN bandwidth. The start may be slow, but as more copies become available locally, it spreads like wildfire and, if implemented efficiently, saves both time and bandwidth cost.

- P2P no longer needs a centralized location to get the data; a peer can get the data from any neighbor that has a copy of the content. Every peer can download or upload data. Every peer in P2P is equal, with the same privileges. They can all participate if they have or want the content.

- P2P is not limited to file sharing; peers can also share resources such as processing power and hard disk space. There is no central system guiding or monitoring them. The peers themselves are the driving force.

- P2P can be structured or unstructured. Some peers may be able to connect to all other peers, while others can connect only to their neighbors. So, there is no single fixed way to create a P2P network.
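To see why local copies "spread like wildfire", here is a minimal sketch of an idealized model. It assumes that one peer already holds the file, that every peer holding a copy uploads it to exactly one new peer per round, and that no transfer fails. These assumptions and the function name `rounds_to_reach_all` are illustrative only and do not describe any particular P2P product.

```python
def rounds_to_reach_all(total_peers, seeded=1):
    """Count sharing rounds until every peer holds the file, in an
    idealized model where each holder uploads to one new peer per round."""
    if total_peers < 1 or seeded < 1:
        raise ValueError("total_peers and seeded must be at least 1")
    have = min(seeded, total_peers)
    rounds = 0
    while have < total_peers:
        # Every current holder serves one peer that lacks the file,
        # so the number of holders at most doubles each round.
        have = min(total_peers, have * 2)
        rounds += 1
    return rounds


print(rounds_to_reach_all(15_000))  # prints: 14
```

Under these assumptions, 15,000 devices are covered in 14 rounds of LAN transfers after a single WAN download, because the number of copies doubles each round. Real networks will behave less neatly, but the doubling is what makes the approach scale.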

For file sharing, P2P is fast and extremely scalable, but it can also become a bottleneck when software doing P2P consumes all the available bandwidth. For IT administrators, there are many useful systems management suites, such as Microsoft SCCM.

Using SCCM, administrators can put patches in place more easily and effectively, but even SCCM has some limitations.

1E Nomad is a true win-win for both companies and IT admins. It is efficient, lightweight, secure, and encrypted software. It not only provides all the power of peer-to-peer, it does so efficiently enough that updates and patches can be deployed in minutes and hours rather than weeks and months. It also scales beautifully and is really cost-effective. It doesn’t need any extra hardware to support it and can run silently in the background. In fact, most users won’t even notice that something is doing wonders for their machines and desktops in the background. It is intelligent and keeps itself from overusing or choking the bandwidth (P2P’s weakness). It estimates bandwidth usage and throttles itself accordingly. That’s what makes it so unnoticeable.

For the same hypothetical company, imagine the same patch being distributed and downloaded across the branch location in a week or maybe less. Compared with the 104 days above, that’s a time saving of almost 97 days!

A silent hero, standing ready…